Recently, but not shockingly, the internet and its consumers were swept up in a social media-driven craze over a plush monster toy: the Labubu. The cute plush monster took over the internet and social media platforms like TikTok and Instagram, working its way into one of 2025’s biggest fashion trends. Created by Kasing Lung in 2015, Labubu is a fictional character that Lung turned into collectibles through a licensing agreement with Pop Mart in 2019.

BLACKPINK star Lisa and celebrities like Rihanna, Dua Lipa, and Kim Kardashian have all contributed to the doll’s fame, making it one of 2025’s most sought-after trends. Beyond the celebrities and influencers credited with the Labubu’s popularity, the collectibles are also sold in what are called “blind boxes.” The color and type of Labubu inside is revealed only after the buyer has purchased and received the box, which adds to the excitement and anticipation of finding a rare figure. Labubus appeared all over Instagram and TikTok, going “viral” as people created memes of them, posted unboxing videos, and incorporated them into their personal style.

The head of licensing at Pop Mart North America, Emily Brough, disclosed that such blind boxes generated more than $419 million in revenue in 2024, achieving 726.6% year-over-year growth. Much of that growth can be attributed to TikTok. As of April 2025, Pop Mart had generated $4.8 million in sales on TikTok Shop, an 89% rise in only one month. The doll rapidly became ultra-desirable online. Although the collectibles retail for approximately $27 in the U.S., resellers typically charge double that price or more. A rare Chestnut Cocoa Labubu, for example, can go for $149 on eBay. Blind boxes fuel the fascination with Labubus, and resellers add a sense of exclusivity that ultimately attracts even more consumers. For consumers, Labubus are more than a fashion accessory. Owning one signals being up to date with current trends, being relatable to other consumers and influencers, and having something highly sought-after.

With a product like Labubus going viral, it is natural to wonder what exactly makes something viral. The Merriam-Webster dictionary describes “viral” as something that is “quickly and widely spread or popularized especially by means of social media.” Although the definition is broad, it captures the two core attributes of anything viral: 1) it spreads quickly, and 2) it becomes popular. A product can also gain popularity over an extended period of time. What distinguishes a viral product from a merely popular one is the rapid pace at which it gains recognition, whether over a few months, weeks, or days, or even overnight. Essentially, a product or trend goes viral when its promoter creates highly engaging, shareable content that taps into the audience’s emotions, prompting viewers to tap, click, like, comment on, and share the post. As engagement grows, the product spreads widely across social media, quickly earning that viral title.

Why should the Labubu craze not be considered shocking? Not everyone would expect a plush monster to go viral and become ultra-desirable. However, the common ground behind most viral products is the internet. Labubus are only one example in a nearly endless list of trends and products that social media has boosted. Social media had the same effect on Stanley cups in 2023. Viral videos of the cups circulated on TikTok, and Stanley’s revenue jumped from approximately $94 million in 2020 to $750 million in 2023. Social media platforms are clearly an underlying driver of viral products. They offer easy access to posts and videos, along with the individualized algorithms, content creators, celebrities, and online communities that platforms like TikTok and Instagram provide.

It is no secret that celebrities and influencers use social media platforms like TikTok and Instagram to promote products as part of their brand endorsements. Their viewers and followers are inevitably influenced, which sets a trend’s lifecycle in motion. With considerable help from social media algorithms, the trend typically goes viral quickly, but the product’s moment is rarely long-term.

These social media trends that come and go are often called “micro-trends.” These are short-lived trends that draw a high amount of attention in a fairly short period outside the traditional trend cycle, then lose public relevance just as fast as they gained it. Micro-trends are advertised on social media as consumer must-haves, creating a ripple effect in which consumers feel they need to buy, buy, buy. The shortened lifecycle of viral products and micro-trends has locked consumers into a long-term cycle of buying them. It comes full circle: a product goes viral and turns into a micro-trend. That micro-trend leads to overproduction and inevitable overconsumption, driving up market demand in ways that can destabilize economies.

The problem is that micro-trends are closely tied to overconsumption, which pushes companies to produce and release products quickly to keep up with trends. This overconsumption is accompanied by the rapid, disposable use of micro-trend products, adding to waste problems that already exist in industries such as fashion. For example, fast fashion clothing associated with micro-trends commonly ends up at the Kantamanto Market in Accra, Ghana, where about 40% of the clothing leaves as waste. Micro-trends also affect the longevity of businesses. Consumers lose interest soon after a trend peaks, so local businesses struggle to keep up with the rapid production that micro-trends require. Simply put, micro-trends are not sustainable for consumers, businesses, or the environment.

Consumers’ desire to be part of current micro-trends can be traced back to social media. As consumers move away from magazines like Vogue or Elle, platforms like TikTok have become the new resource for finding the newest and most popular trends. Social media algorithms create echo chambers around specific trends. They identify when a certain style gains recognition and then feed it to users with similar tastes, and from there a micro-trend is born. The algorithm identifies trends by recognizing which posts receive the most engagement, that is, which content is going viral. The more a post is shared, liked, or commented on, the faster it spreads. Trends turn over faster on these platforms because users get almost instant updates on what is trending and popular, feeding a loop of doomscrolling and impulsive spending. Trends now surface in feeds, and generate demand, faster than supply chains can respond. With social media apps and their algorithms, consumers have almost instant access to finding and buying into micro-trends, and that instant access creates instant gratification.

Algorithms are not the only part of social media that amplifies the viral impact on businesses. Social media platforms have also become the new digital storefront, a place to both browse and buy. It is simple: open the app, scroll, click, and buy. Platforms like Instagram and TikTok offer individualized, built-in storefronts that make it easier for users to buy into the latest viral trends, and fast. For example, by 2024 TikTok Shop had grown to more than 500,000 U.S.-based sellers within eight months of launching, and had around 15 million sellers worldwide. Such storefronts can benefit companies whose business models prioritize overconsumption, but they can also promote micro-trends. Algorithms and e-commerce sites can strongly affect the economy. In 2024, economists at the Federal Reserve found that inflation-adjusted spending on retail goods had increased compared with 2018. Businesses are affected as well, as consumers are drawn away from shopping in person at small, local, and traditional retailers. The overarching economic impact is that viral products and micro-trends produce temporary, short-term sales while creating long-term instability for businesses.

The rise of e-commerce on social media also brings new problems for consumers. Whether shopping in store or online, consumers have the right to safety, to be informed, to choose, to be heard, and to redress. To protect consumers, businesses can provide clear, transparent information about their products; maintain fair transactions; hold themselves accountable for the safety of their products; and protect their consumers’ privacy. Given the large volume of transactions on e-commerce sites, however, it is difficult to properly monitor and regulate every transaction and guard against every issue that may arise. Consumers are now concerned about where the personal information they share is going, about avoiding cyber fraud and scams, and about receiving low-quality products.

Social commerce also introduces new consumer-protection issues, such as intellectual property concerns. For example, as social media rapidly spreads products and trends, copycat products are becoming increasingly common. A copycat product is one that is designed, branded, or packaged to closely mimic the product of a well-established competitor. Copycats are created deliberately to trade on the established brand’s identity and reputation. The legal implications of copycat products include trademark infringement, unfair competition, and consumer fraud liability. Some brands reproduce viral creators’ designs without permission, devaluing creative labor. Viewers are also more likely to believe that such copycats are associated with the original or are of similar quality. Influencers, for their part, should be aware that promoting a dupe or copycat on social media in pursuit of that viral title could violate Section 5(a) of the Federal Trade Commission Act.

In 2020, Amazon filed a lawsuit against two influencers, Kelly Fitzpatrick and Sabrina Kelly-Krejci, alleging that they promoted counterfeit products on their social media accounts. The two were accused of using Instagram, Facebook, and TikTok accounts to promote fake luxury items sold on Amazon, and of working with eleven other individuals and businesses to do so. The listings were allegedly an effort to evade Amazon’s counterfeit detection tools. The lawsuit concluded in a settlement in 2021 that barred both influencers from marketing, advertising, and promoting products on Amazon. It is one of many examples of the legal issues that arise as social media and e-commerce merge. For Amazon specifically, its marketplace of third-party sellers accounts for more than half of its overall e-commerce sales. However, counterfeit (copycat) and unsafe products have become a notorious problem that extends beyond Amazon’s ecosystem into the entire e-commerce realm.

E-commerce’s contribution to overconsumption can be analyzed through the four-step purchasing process: awareness, desire, consideration, and purchase. Products go viral quickly and micro-trends come and go fast, so this process speeds up for consumers. Consumers will often jump from awareness directly to purchase if the price tag is small enough. Either way, desire is created when the algorithm surfaces a product or a content creator references it. Many posts steer consumers to e-commerce sites, showing how easy shopping becomes once social media and e-commerce merge. Retailers also draw consumers in by exploiting cognitive biases. For example, countdown banners can create an urgency bias that makes consumers feel they need the product now. Similarly, social proof bias can push a consumer who is still weighing a purchase to buy after seeing tags that highlight how many people have bought the product or how highly it is rated.

Outside the U.S., in February 2025 the European Commission launched an investigation into online retailer SHEIN’s compliance with EU consumer laws, urging SHEIN to stop using dark patterns such as fake discounts and pressure selling. The Commission’s complaint essentially asks SHEIN to stop using deceptive techniques such as “confirm shaming,” which play on consumers’ emotions. It also asks SHEIN to provide substantive evidence that customer testimonials and “low stock” messages are genuine. The Commission links SHEIN’s “dark patterns” to environmentally harmful overconsumption, since such dark patterns exploit the cognitive biases that drive consumers to buy. The Commission’s efforts set a standard the U.S. should take into account. Although the sheer size of the internet makes it difficult to regulate, more investigations like this one can be a first step toward protecting consumers.

Although the growth of e-commerce and social media brings exciting immediate benefits to consumers, its complications should not be overlooked. It is important to recognize when our internet moves faster than our market. Viral products and trends may have short lifecycles, but their impacts on businesses and consumers can be long-lasting.

Francesca Rocha

November 12, 2025

Mahmoud Khalil, a lawful permanent resident and recent Columbia University graduate, was arrested by ICE in New York in March 2025 after participating in pro-Palestinian demonstrations. He was detained in Louisiana for over three months pending removal proceedings.

platform obtains verifiable parental consent or reasonably determines that the user is not a minor. It also bans push notifications and advertisements tied to those feeds between 12 a.m. and 6 a.m. unless parents explicitly consent. The rule making process remains ongoing, and enforcement will likely begin once these standards are finalized.

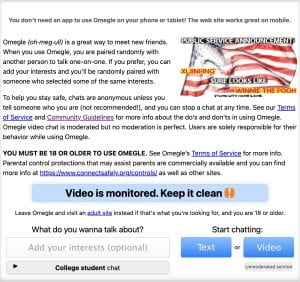

Omegle is a free video-chatting social media platform. Its primary function has become meeting new people and arranging “

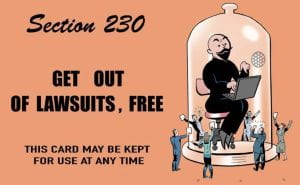

found that Section 230 immunity did not apply to Omegle in a