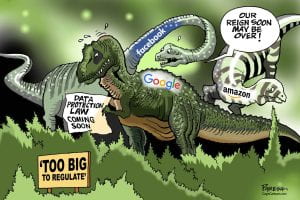

The debate over regulating social media in the United States is a heated one. Lawmakers, fueled by both theoretical concerns and specific incidents, have proposed and debated legislation to regulate social media platforms, thus far unsuccessfully. Bills have failed because of both constitutional concerns and a lack of appetite. Sen. Amy Klobuchar said the tech lobby is so powerful that bills with “strong, bipartisan support” can fall apart “within 24 hours.”

Recently, the Biden administration was prohibited from asking sites to remove misinformation, with a federal appeals court ruling that doing so violated the First Amendment. Additionally, Section 230 is alive and well, shielding media platforms from liability for pretty much anything users post on their sites. This is reflected in the recent cases Twitter, Inc. v. Taamneh and Gonzalez v. Google.

Elsewhere in the world, legislation designed to regulate social media is being proposed, approved, and in some places already implemented. Unlike the US, Europe, the UK and others do not have the high First Amendment bar to overcome, making regulation a realistic possibility. A survey of active and proposed regulation shows determination and promise in tackling some of the greatest concerns that social media poses.

Legislation worldwide has been designed to combat a variety of harms and threats, with each country and legislative body disclosing its most important concerns. The goals range from protecting children from viewing inappropriate content to establishing a level playing field to foster innovation, growth, and competitiveness in business. Most regulation places an onus on social media companies to be vigilant, police their own platforms, and remove content that violates the law. Violations can draw heavy fines, in some regimes up to 10% of a platform’s global annual turnover. A survey of the proposed and adopted legislation regulating social media shows progress toward a goal of a happier and safer online existence for all.

In the European Union

The European Union (EU) has opted for a regulatory “one-two punch” to both control anticompetitive behavior by the platforms and stop misinformation in its tracks.

The Digital Services Act (DSA) and the Digital Markets Act (DMA) form a single set of rules that apply across the whole EU. They have two main goals: to create a safer digital space and to establish a level playing field to foster innovation, growth, and competitiveness for smaller and newer businesses.

The DSA, passed in September 2023 and to be implemented in the new year, is designed to address social media’s harms to society. Under the DSA, platforms as well as providers of digital services will be required to police their platforms more aggressively. The DSA imposes reporting and monitoring obligations for illegal content and misinformation. Digital service providers, including social media sites, will be compelled to set up new policies and procedures to identify and remove flagged hate speech, terrorist propaganda and other “material defined as illegal by countries within the European Union.” This includes a duty to monitor and report, with mandatory internal audits and reporting on their results. The DSA also requires platforms to disclose how their services amplify divisive content and to stop targeting online ads based on a person’s ethnicity, religion or sexual orientation. Using children’s data for algorithms and advertising is also strictly prohibited. Social media giants, designated as very large online platforms, are held to an even higher standard under the DSA. Platforms like Meta, X, and TikTok will be compelled to analyze the systemic risks that they can create, including the dissemination of illegal content and effects on fundamental rights, elections and electoral processes, gender-based violence, and mental health.

Penalties for failure to adhere to the DSA are steep. Violations can draw fines of up to 6% of a platform’s global annual turnover.

The DMA, which passed earlier this year, is meant to protect businesses, small businesses in particular, from anticompetitive behavior by platforms. Most of the regulation is aimed at creating a level playing field for businesses using platforms and at loosening the grip of platforms over app stores, advertising, and online shopping. Under the DMA, a platform cannot treat its own services and products better than those offered by a third party on the platform. Platforms must give business users access to the data the platform collects from them, and they can no longer track users beyond the platform’s own site for use in advertising or prevent third parties from directing users from the platform to another site.

Penalties for violation could soar into the billions. Violators can be fined up to 10% of the platform’s global annual turnover, and up to 20% for repeat offenses.

To enforce the new laws, the EU plans to form a unit of 240 officers dedicated to the purpose.

United Kingdom

In September 2023, Parliament approved the Online Safety Bill. The bill is designed to prevent children from consuming harmful content online. Under the bill, platforms must act rapidly to remove illegal or harmful content from their sites. They must implement more transparent procedures and enforce them. They must implement and enforce age-checking measures to ensure children are not seeing inappropriate content. Platforms must also provide parents with accessible ways to report problems as they arise and give parents the ability to make their children’s accounts more secure using parental controls. They must also carry out and publish risk assessments. Critically, the law places a legal responsibility on social media platforms to keep the promises they make to users at sign-up through their terms and conditions. In effect, platforms are legally bound to enforce their own terms and conditions.

Violations can draw fines of up to 18 million pounds or 10% of a platform’s global annual revenue, whichever is higher.

Australia

Australia also recently proposed legislation aimed at regulating and removing misinformation and illegal content, particularly for the benefit of children. The law would compel platforms to remove “cyber-abusive” material within 24 hours. It would also allow the bodies in charge there, the Australian Competition and Consumer Commission (ACCC) and the Australian Association of National Advertisers (AANA), to obtain information and documents from digital platforms relating to disinformation and misinformation.

Indonesia

Indonesia centers its newest proposed legislation on its concern for local markets and local vendors. Last month, the President of Indonesia announced that the Trade Ministry would issue a regulation banning e-commerce on social media platforms. No specific platforms were mentioned, but TikTok is likely one of the targets. Officials have claimed that e-commerce sellers using predatory pricing on social media platforms are threatening local markets in Indonesia, with some officials specifically citing TikTok. The proposed rule prohibits direct transactions on social media sites, but vendors can still advertise products there. There is no word yet on how or when implementation would commence.

Efforts from around the globe to regulate social media and digital platforms are full of promise. Implementation and enforcement will be a challenge, as the digital world expands and mutates continually, and opposition to change by social media platforms is sure to follow. Here in the United States, there is much we could borrow and implement. From the comfortable position of early and interested observer, let’s see.

