Because the Supreme Court prefers to decide cases on narrow grounds, are employees and employers operating without realizing how dense the fog is that obscures the boundaries of their relationship in cyberspace?

Because these rulings are narrow, decisions from outside employment law may offer analysis that can serve as temporary guideposts for employers and employees while they monitor the developing landscape.

Over a decade ago, a unanimous Supreme Court decided a case on just such narrow grounds. In City of Ontario, Cal. v. Quon, the Court avoided addressing the employee privacy issue by deciding that the employer acted reasonably, which justified its non-investigatory search in 2002 of an employer-issued pager. The employee brought an action for deprivation of civil rights under 42 U.S.C. § 1983. A § 1983 claim requires that a governmental actor deprive a person of a constitutional right while acting under color of law. The government, as the employer, had issued a policy covering email and Internet usage, but the policy did not specifically address text messages. However, a supervisor verbally put all employees on notice that text messages would be treated as emails, despite the difference in the technology used for transmission. Some of the non-work-related messages sent during working hours were sexual. Although both the District Court and the Court of Appeals for the Ninth Circuit decided that the employee had an expectation of privacy in the text messages, the Supreme Court avoided addressing that issue while finding the search constitutional.

Since most of today’s labor force has never carried a pager, the more relevant aspect of this decision is the Court’s forecast that “rapid changes in the dynamics of communication and information transmission” may be evident not just “in the technology itself but in what society accepts as proper behavior.” How right the Court was; I could not have predicted the explosion of technology. Because emerging technology’s role in society was unclear, detailing the constitutionality of other actions could have been risky. Last month this preference for narrow rulings was reinforced. However, definitive holdings could have become the foundation upon which employers and employees could make educated decisions while technology’s role in society becomes more evident. As when an airplane flies out of cloud cover, the landscape would suddenly become visible.

The Court foresaw that cell phone communications would become so essential to self-expression that employers would need to communicate clear policies. However, the challenge lies in setting clear policies when the boundaries of privacy and protected speech are not clearly defined but obscured in the fog created by balancing tests established in other speech cases.

One such landmark ruling is the 1969 “school arm-band case” decided during the Vietnam War. In Tinker v. Des Moines Independent Community School Dist., the Court separately analyzed the time, place, and type of behavior/communication. Tinker’s substantial disruption analysis requires that a prohibition on speech rest on something more than a mere desire to avoid discomfort and unpleasantness.

The Court in Young v. American Mini Theatres, Inc. established that speech cannot be suppressed just because society finds the content offensive. Likewise, in Skinner v. Railway Labor Executives’ Ass’n, the Court found that the amendments to the Constitution also apply to the government when it performs non-criminal functions.

Similarly, the Court ruled in Treasury Employees v. Von Raab not only that the Fourth Amendment applies to the government as an employer but also that the issue of privacy applies to private-sector employees.

More recently, Justice Stevens addressed a public employee’s expectation of privacy in his concurring opinion in Quon. He highlighted a significant issue: the lack of “tidy distinctions between workplace and private activities.” Today’s social media and society’s views have further blurred the boundaries to the point of non-existence.

Just last month, the Supreme Court had an opportunity to establish bright lines that would have further clarified the legal landscape of social media. Such a rule could have applied to the employer-employee relationship. In Mahanoy Area School District v. B.L., the Court held that the school violated the student’s free speech rights because the school’s special interests did not overcome the student’s right to freedom of expression. The decision was based primarily on the time of the speech, the location from which B.L. made it, the content, and the target audience. The school’s interests also focused on preventing disruption in the facility.

Justice Alito, in his concurrence, explained that it is not prudent to establish a general First Amendment rule for off-premises student speech but rather to examine the analytical framework. While this approach serves the parties to this case and is of some value to other students, it is so narrowly tailored that it may have little precedential value in other speech disputes.

Rather than announcing a bright-line rule, the Court is building a boundary fence around the First Amendment one panel at a time. While the legal community functions within this ever-changing reality, society bears the burden until clarity is achieved.

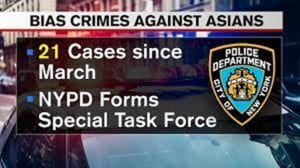

The Court’s lesson from Mahanoy might be that regulations on student speech raise serious First Amendment concerns; school officials should proceed cautiously before venturing into this territory. That same caution may be prudent for both private-sector and public-sector employers. Social media’s impact is not limited to situations where a person’s post impacts their employment. One example, among many, is Amy Cooper, the Central Park 911 caller, who was immediately fired for racism and later charged with filing a false police report. She has since filed a civil suit against her employer.

Unfortunately, the Court’s preference for disposing of cases narrowly, without addressing all the possible issues, creates tension between different interpretations until the Court adds the last panel, completing the boundary fence around the First Amendment. Until then, we will have to consider how the courts will decide issues within the employment arena, such as the termination of Amy Cooper or the firing of any law enforcement officer over social media posts.

Will the courts find that employees, like students, do not “shed their constitutional rights to freedom of speech or expression” at the workplace gate in the era of social media?