Technological advances and growing consumer demand have taken shopping to a new level. You can now buy clothes, food, and household items from the comfort of your couch in a few clicks: add to cart, pay, ship, and confirm. No longer are you limited to products sold in nearby stores; shipping makes it possible to obtain items internationally. Even social media platforms offer shopping features, such as Instagram Shopping, Facebook Marketplace, and WhatsApp. Despite its convenience, online shopping has also created an illegal marketplace for wildlife species and products.

Wildlife trafficking is the illegal trading or sale of wildlife species and their products. Elephant ivory, rhinoceros horns, turtle shells, pangolin scales, tiger furs, and shark fins are a few examples of highly sought-after wildlife products. As social media platforms expand, so does wildlife trafficking.

Wildlife Trafficking Exists on Social Media?

Social media platforms make it easier for people to connect with others internationally. These platforms are great for staying in contact with distant aunts and uncles, but they also give criminals and traffickers another way to communicate. They let users remain anonymous without ever meeting in person, which makes it harder for law enforcement to determine who a user really is. Even so, can social media platforms be held responsible for making it easier for criminals to commit wildlife trafficking crimes?

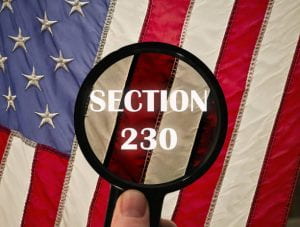

Thanks to Section 230 of the Communications Decency Act, the answer is most likely: no.

Section 230 provides broad immunity to websites for content a third-party user posts on the website. Even when a user posts illegal content, the website generally cannot be held liable for it. However, there are certain exceptions where websites have no immunity. These include human and sex trafficking. Although these carve-outs are fairly new, it is clear that there is an interest in protecting people vulnerable to abuse.

So why don’t we apply the same logic to animals? Animals are also a vulnerable population. Many species are no match for guns, other weapons, traps, and human encroachment on their natural habitats. Like children, animals may not have the ability to understand what trafficking is or the physical strength to fight back. Social media platforms, like Facebook, attempt to combat the online wildlife trade, but their efforts continue to fall short.

How is Social Media Fighting Back?

In 2018, the World Wildlife Fund and 21 tech companies created the Coalition to End Wildlife Trafficking Online. The goal was to reduce illegal trade by 80% by 2020. While it is difficult to measure whether this goal is achievable, some social media platforms have created new policies to help meet this goal.

“We’re delighted to join the coalition to end wildlife trafficking online today. TikTok is a space for creative expression and content promoting wildlife trafficking is strictly prohibited. We look forward to partnering with the coalition and its members as we work together to share intelligence and best-practices to help protect endangered species.”

–Luc Adenot, Global Policy Lead, Illegal Activities & Regulated Goods, TikTok

In 2019, Facebook banned the sale of animals altogether on its platform. But this did not stop users. A 2020 report showed that a variety of illegal wildlife was still for sale on Facebook, which indicates the new policies were ineffective. Furthermore, the report stated:

“29% of pages containing illegal wildlife for sale were found through the ‘Related Pages’ feature.”

This suggests that Facebook’s algorithm actively connects users to pages and similar content based on their interests. In effect, algorithms encourage users who seek out wildlife trafficking content to keep relying on the platform. They will continue to use social media platforms because the platforms do half of the work for them:

- Facilitating communication

- Connecting users to potential buyers

- Connecting users to other sellers

- Discovering online chat groups

- Discovering online community pages

Rather than reducing the reach of wildlife trafficking, these features increase the visibility of this type of content to other users. Do Facebook’s algorithms go beyond Section 230 immunity?

Under these circumstances, Facebook maintains immunity. In Gonzalez v. Google LLC, the court explained that websites are not liable for user content when they employ content-neutral algorithms. This means that the website did nothing more than program an algorithm to present content similar to a user’s interests. The website did not directly encourage the publication of illegal content, nor did it treat that content differently from other user content.

What about when a website profits from illegal posts? Facebook receives a 5% selling fee for each shipment sold by a user. Since illegal wildlife products are rare, these transactions are highly profitable. A pound of ivory can be worth up to $3,300. If a user sells five pounds of ivory from endangered elephants on Facebook for $16,500, the platform would collect $825 from that one transaction. The Facebook Marketplace algorithm works much like the algorithm that recommends content based on user interest and engagement: it can push illegal wildlife products to a user who has searched for similar products. If illegal products are constantly pushed and sales go through, Facebook profits from those transactions. Does this mean that Section 230 will continue to protect Facebook when it profits from illegal activity?
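The fee arithmetic in the example above can be checked with a short sketch. It uses only the two figures quoted in the text (a 5% selling fee and ivory at up to $3,300 per pound); the function and constant names are hypothetical, chosen for illustration, and the calculation assumes the fee applies to the full sale price.

```python
from decimal import Decimal

# Figures quoted in the article; the names are illustrative only.
SELLING_FEE_RATE = Decimal("0.05")        # 5% selling fee per shipment
IVORY_PRICE_PER_POUND = Decimal("3300")   # upper-end price per pound, in USD


def platform_fee(pounds, price_per_pound=IVORY_PRICE_PER_POUND,
                 fee_rate=SELLING_FEE_RATE):
    """Return the platform's fee on a sale, assuming the fee is a flat
    percentage of the total sale price."""
    sale_total = Decimal(pounds) * price_per_pound
    return sale_total * fee_rate


sale_total = 5 * IVORY_PRICE_PER_POUND
print(f"Sale total:   ${sale_total:,.2f}")       # Sale total:   $16,500.00
print(f"Platform fee: ${platform_fee(5):,.2f}")  # Platform fee: $825.00
```

Under those assumptions, a single five-pound sale yields $825 in fees, which matches the figure in the example.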

Evading Detection

Even with Facebook’s prohibited sales policy, users get creative to avoid detection. A simple search of “animals for sale” led me to a public Facebook group. Within 30 seconds of scrolling, I found one user selling live coral and another selling an aquarium system with live coral and live fish. The former listing reads: Leather $50. However, the picture shows a live coral in a fish tank. “Leather” identifies the type of coral without saying that it is coral. Even if this were fake coral, a simple Google search shows that a piece of fake coral is worth less than $50. If Facebook is failing to prevent users from selling live coral and live fish, it is most likely failing to prevent online wildlife trafficking on its platform.

Another common way to evade detection is to post a vague description or a photo of an item along with the words “pm me” or “dm me.” These are abbreviations for “private message me” and “direct message me.” It is a quick way to steer interested users into a private chat, where the details can be discussed out of the public eye. Sometimes a user will offer alternative contact methods, such as a personal phone number or an email address. This moves the interaction off the platform entirely or onto a different social media platform.

Because these transactions are highly profitable and can be conducted anonymously online, the stakes for traffickers are low. Social media platforms are great for concealing a user’s identity. Users can use fake names to maintain anonymity behind their computer and phone screens. There are no real consequences for using a fake name when the user is unknown, nor is there any identity verification to establish who the user really is. Even if a user is banned, the person can create a new account under a different alias. Some users are criminals tied to organized crime syndicates or terrorist groups. Many operate outside of the United States, which makes them difficult to locate. Thus, social media platforms give criminals an incentive to hide among various aliases with little to lose.

Why Are Wildlife Products Popular?

Wildlife products are in high demand for human benefit and use. Common reasons why humans value wildlife products include:

Do We Go After the Traffickers or the Social Media Platform?

Taking down every single wildlife trafficker, along with the users who facilitate these transactions, would be the perfect solution to end wildlife trafficking. Realistically, it is too difficult to identify these users because of online anonymity and geographical limitations. Social media platforms, on the other hand, are identifiable, and they continue to tolerate these illegal activities.

Here, it is clear that Facebook is not doing enough to stop wildlife trafficking. With each sale made on Facebook, Facebook receives a percentage. Section 230 should not protect Facebook when it reaps the benefits of illegal transactions. Profiting from these sales goes a step too far and should open Facebook to liability despite Section 230.

Should Facebook maintain Section 230 immunity when it receives proceeds from illegal wildlife trafficking transactions? Where do we draw the line?

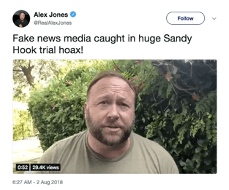
